Agents Are Starting to Operate Systems

Over the past few years, AI has primarily generated content. That is changing.

Agents are now operating inside enterprise systems. They write code, query data, trigger pipelines, call APIs, and create databases. We no longer just ask AI “what, where, or how.” We now instruct it to execute and take action. AI systems can use tools, read files, decompose problems, and interact directly with SaaS and developer platforms.

Agents Will Proliferate

Enterprises are entering a world where agents will rapidly proliferate. They will be embedded across:

- developer environments

- data platforms

- SaaS applications

- enterprise workflows

- copilots and automation systems

A single organization may soon run hundreds or thousands of agents. In many environments, agents will outnumber humans. And they will not just observe systems. They will operate them.

Agents Act Through Tools

Agents deliver outcomes through tools that are already embedded in enterprise environments:

- SQL queries

- APIs

- pipelines

- databases

- SaaS connectors

- cloud resources

- MCP tools

When an agent uses a tool, it is no longer generating text. It is taking action inside enterprise systems. Those actions interact with critical control surfaces:

- data — what is accessed or modified

- identity & permissions — who is allowed to act

- credentials — how actions are executed

- infrastructure — where actions run

Tools turn AI reasoning into real-world enterprise activity.

The Agentic AI Blind Spot

Today, most organizations cannot answer basic questions:

- What data did the agent access?

- Which identity executed the action?

- What tools were used?

- What actions were triggered?

- Which policies governed the workflow?

This is not because enterprises lack governance. They have many systems of record:

- IAM systems

- cloud platforms

- data governance tools

- ticketing systems

But these systems were designed for humans and applications, not for autonomous agents orchestrating tools across the enterprise. This creates a new operational blind spot: agentic AI activity without enterprise control.
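To make the questions above concrete, here is a minimal sketch of what a per-action audit record could look like. It is only an illustration: the `AgentAuditRecord` class, its field names, and the sample values are all hypothetical and do not describe any particular product's schema.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass
class AgentAuditRecord:
    """One record per agent action. All field names are hypothetical."""
    agent_id: str
    identity: str             # which identity executed the action
    tool: str                 # which tool was used
    action: str               # which action was triggered
    data_accessed: list[str]  # what data the agent accessed
    policies: list[str]       # which policies governed the workflow
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def unanswered_questions(record: AgentAuditRecord) -> list[str]:
    """Return the names of fields that were left empty."""
    return [name for name, value in asdict(record).items() if not value]


# Sample values are invented for illustration.
record = AgentAuditRecord(
    agent_id="report-agent-7",
    identity="svc-analytics",
    tool="sql_query",
    action="SELECT",
    data_accessed=["sales.orders"],
    policies=[],  # no governing policy was recorded for this action
)

print(json.dumps(asdict(record), indent=2))
print(unanswered_questions(record))
```

In this sketch, an empty field is treated as an unanswered question, so the sample record reports `policies` as the gap.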

Every New Layer Requires a Control Plane

Every major computing layer introduced a control plane:

- cloud → cloud control planes

- containers → Kubernetes

- identity → identity control planes

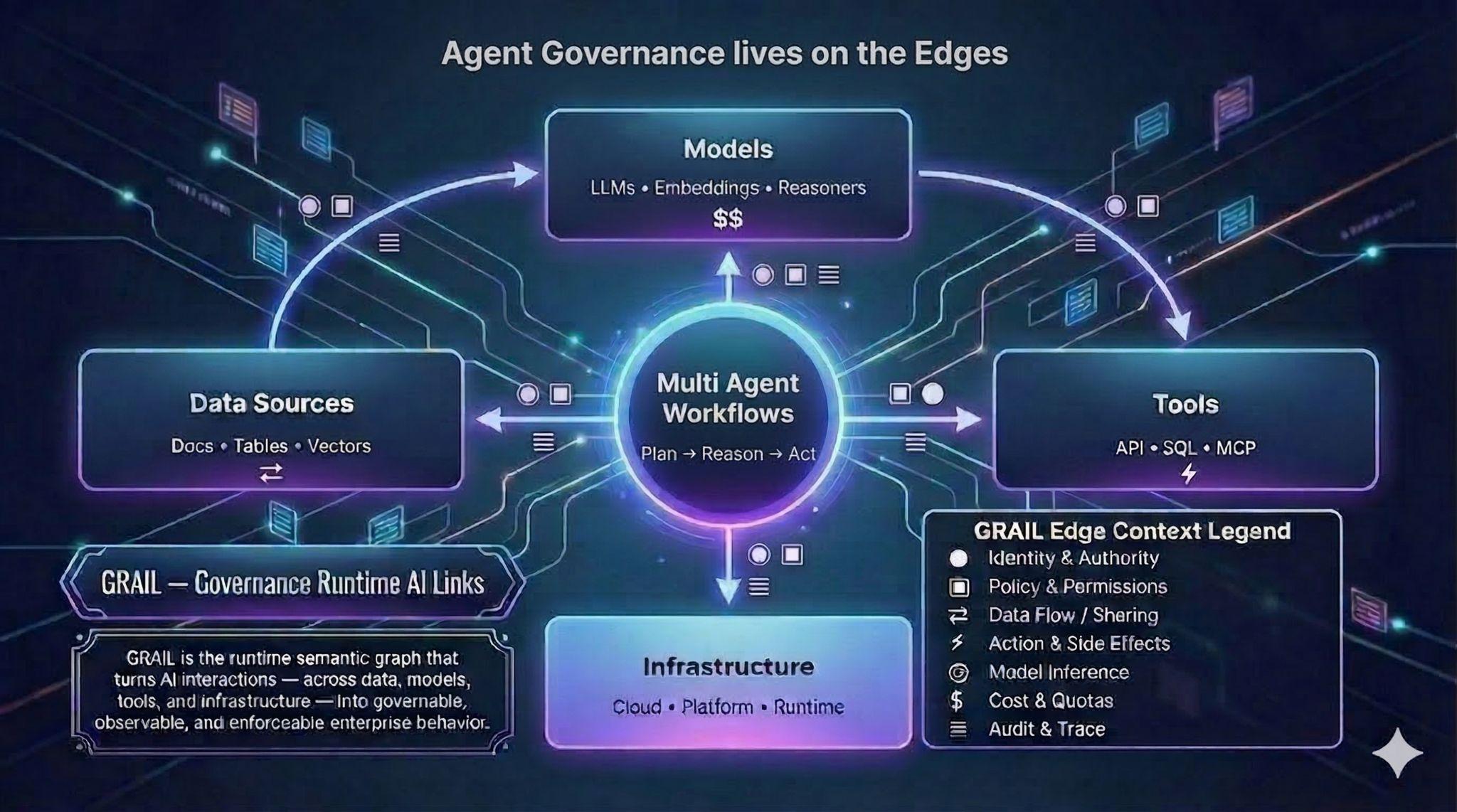

Agentic AI introduces a new operational layer, one where agents interact with:

- models

- data

- tools

- infrastructure

- enterprise systems

This layer requires a way to observe, govern, and control AI behavior across the enterprise.
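As a rough illustration of how observing, governing, and controlling might fit together around a single tool call, consider the sketch below. It assumes a hypothetical in-memory policy table (`ALLOWED_TOOLS`) and a hypothetical wrapper (`guarded_call`). It is not LangGuard's API, and a real control plane would draw policy from enterprise systems of record rather than a hard-coded dictionary.

```python
from typing import Callable

# Hypothetical policy table: which tools each agent may call.
ALLOWED_TOOLS = {
    "report-agent": {"run_sql"},
    "deploy-agent": {"trigger_pipeline"},
}

# Hypothetical in-memory activity log; a real system would persist this.
activity_log: list[dict] = []


class PolicyViolation(Exception):
    """Raised when an agent calls a tool it is not allowed to use."""


def guarded_call(agent_id: str, tool_name: str,
                 tool: Callable[..., object], *args, **kwargs):
    # Govern: look up whether this agent may use this tool.
    allowed = tool_name in ALLOWED_TOOLS.get(agent_id, set())
    # Observe: record every attempt, allowed or not.
    activity_log.append(
        {"agent": agent_id, "tool": tool_name, "allowed": allowed}
    )
    # Control: block the call before the tool runs.
    if not allowed:
        raise PolicyViolation(f"{agent_id} may not call {tool_name}")
    return tool(*args, **kwargs)


def run_sql(query: str) -> str:
    """Stand-in tool; a real one would talk to a database."""
    return f"executed: {query}"


print(guarded_call("report-agent", "run_sql", run_sql, "SELECT 1"))
try:
    guarded_call("report-agent", "trigger_pipeline", run_sql, "nightly")
except PolicyViolation as err:
    print("blocked:", err)
print(activity_log)
```

The point of the design is that the policy check and the log entry happen before the tool executes, so a denied call leaves a trace without touching the underlying system.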

Introducing LangGuard

LangGuard is an AI Control Plane designed to help enterprises safely operate agentic workflows in production.

LangGuard provides:

- runtime visibility into AI activity

- policy enforcement across agents, tools, and data

- governance anchored in enterprise systems of record

- continuous monitoring and remediation of agent workflows

This enables organizations to answer a critical question: Why is the agent doing this? If you cannot explain what an AI system is doing, you cannot trust it. And if you cannot control it, you should not run it in production.

A Simple Philosophy

We are launching LangGuard with a simple philosophy: Don’t trust marketing. Try the product.

If you are building or deploying AI agents and want to understand what is actually happening inside your workflows, we invite you to try LangGuard.