Whenever a new tech makes a big splash, the first thing people do is look for reasons to say it is over. It happened with the web, it happened with iPods and then smartphones, it happened with the cloud, and now it is happening with MCP. If you spend enough time in tech circles, you have already heard the cries that “MCP is dead!”, or that it will be replaced by the CLI, often with the justification that “Openclaw does not use it, so why should anyone else?”

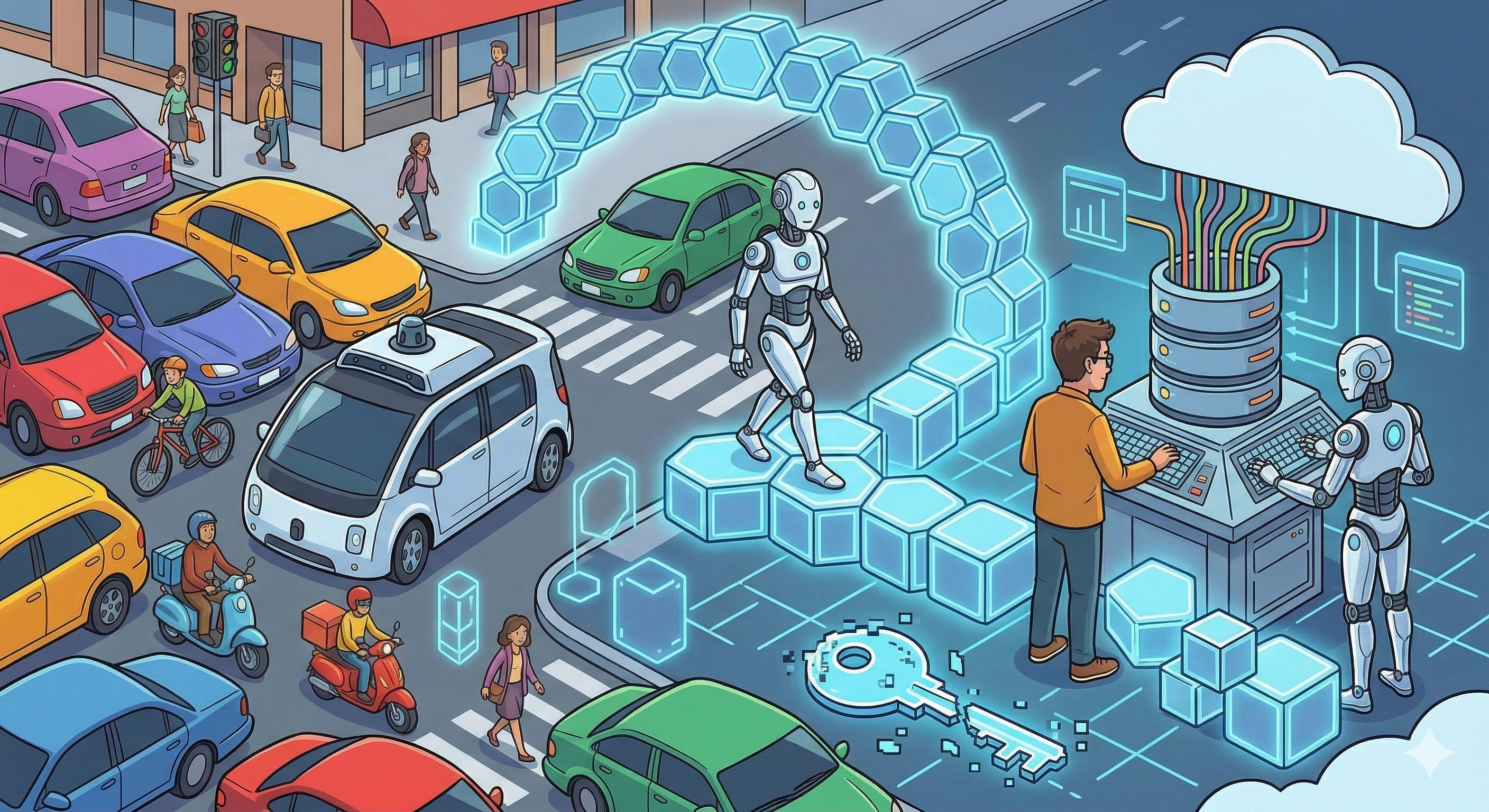

But that hand-waves away the last century of engineering. Change does not happen by deleting the old world and replacing it with a new one overnight. It happens through long, often messy periods of transition where the new has to learn to live with the old. MCP is not actually about code or servers. It is about how machines and humans share space. If we want to understand why MCP is not going anywhere, we don’t have to look any further than the history of the self-driving car.

We have a habit of thinking that if we want a machine to act on our behalf, we should build a separate, perfect environment for it. We think we can just separate the machines from the humans and let them run on their own tracks. But the history of technology shows that this almost never works. The real world is full of people, and if an AI agent is going to be useful, it has to be able to navigate the same tools, websites, and data sources that we use every day.

The Futurama Fallacy and the Dream of Empty Roads

In 1939, at the New York World’s Fair, General Motors built an exhibit that would become the most popular show in the fair’s history. It was called Futurama, and it was a massive, one-acre model of what America might look like in 1960. People sat in moving chairs and looked down at a miniature world that promised a life of leisure and efficiency. The centerpiece of this vision was a network of automated highways where cars drove themselves without any human input. It was the first time the general public was introduced to the concept of self-driving.

The designer of Futurama, Norman Bel Geddes, believed that the secret to autonomy was to remove the human element entirely from the process of driving. In his vision, cars would not have to deal with the unpredictability of human reflexes or the messiness of city streets. Instead, they would run on dedicated motorways where they were guided by radio control and electromagnetic fields. He thought we would build special lanes where cars were locked into a system of buried magnets or metal spikes that kept them centered and moving at high speeds. The cars were essentially on tracks, like trains without visible rails.

This is the exact same mistake many people make today when they talk about AI agents using CLIs. They assume that for an AI to be effective, it needs its own “empty road,” free of the messiness that humans bring. But just like the dream of the automated highway in 1939, this vision fails to account for the massive amount of existing infrastructure we already have. We cannot just tear up the digital roads of the world to make room for the robots. The cars have to learn to drive on the roads we already have.

The Mixed Traffic Challenge

| Development Phase | Self-Driving Car Evolution | AI Agent Evolution |
|---|---|---|
| Initial Vision | Dedicated roads and radio control (1939) | Proprietary, hard-coded integrations |
| Early Testing | Buried wires and magnetic sensors (1957) | Application-specific bots and scripts |
| The “Mixed” Challenge | Sharing the road with human drivers | Agents using human-centric software |
| Scalable Solution | On-board vision and standardized protocols | MCP and the browser-based WebMCP |

The hardest part of the self-driving car journey has not been making the car drive; it has been making the car drive around people. In the industry, this is known as “mixed traffic.” We are currently entering the “mixed traffic” era of software. For the next decade or more, AI agents and humans are going to be using the same applications. If a human assistant and an AI assistant are both helping a manager book travel, they are both going to be looking at the same flight booking sites and the same internal company calendars. We are not going to move all of that into a hidden machine-only layer because humans still need to see it, verify it, and interact with it. Change does not happen by suddenly cutting off the human interface; it happens by allowing the machine to participate in the human workflow.

This is why MCP and WebMCP are so vital. They are what allow an agent to enter the human world with some level of structure. Without them, an AI is forced to interact with a website like a “digital ghost,” scraping the screen and guessing what buttons do based on visual layout. This is slow, expensive, and breaks every time the website updates its design. MCP changes this by letting the application tell the agent exactly what tools are available and what inputs they need, while still maintaining the visual interface that the human user requires. This coordination between human and machine is the key to making AI actually useful in a professional setting, and WebMCP is the fuel that is going to make it go mainstream.

Recently, Google introduced an early preview of WebMCP in Chrome 146. WebMCP is a massive shift because it moves the protocol client-side, directly into the browser, allowing any website to become its own MCP server without needing a separate backend or a complex server-to-server connection. The browser itself becomes the orchestrator between the human, the website, and the AI agent.

This is the ultimate evolution of the “mixed traffic” solution. It acknowledges that the web is still a place for people, but it adds a layer of structure that machines can use reliably. It eliminates the need for fragile screen scraping and replaces it with a standardized way for an agent to ask the browser, “What can I do on this page?”
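To make “What can I do on this page?” concrete, here is a minimal sketch of what an answer might look like. The descriptor shape (a `name`, a `description`, and a JSON Schema `inputSchema`) follows the way MCP lists tools; everything else, including the `search_flights` tool and the `listTools` and `findInputProblems` helpers, is hypothetical and invented for illustration. It is not the WebMCP browser API, which is still in early preview.

```typescript
// Shape of a tool as an MCP-style listing describes it.
interface ToolDescriptor {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: string; description?: string }>;
    required?: string[];
  };
}

// Hypothetical tool that a flight booking page might expose.
const searchFlights: ToolDescriptor = {
  name: "search_flights",
  description: "Search for flights between two airports on a given date.",
  inputSchema: {
    type: "object",
    properties: {
      origin: { type: "string", description: "IATA code, e.g. JFK" },
      destination: { type: "string", description: "IATA code, e.g. SFO" },
      date: { type: "string", description: "Departure date, YYYY-MM-DD" },
    },
    required: ["origin", "destination", "date"],
  },
};

// Hypothetical helper: what the agent receives when it asks what the page offers.
function listTools(): ToolDescriptor[] {
  return [searchFlights];
}

// Hypothetical helper: report missing, unknown, or mistyped inputs before calling.
// Only handles schema types whose name matches JavaScript's typeof
// (string, number, boolean); a real validator would cover full JSON Schema.
function findInputProblems(
  tool: ToolDescriptor,
  args: Record<string, unknown>
): string[] {
  const problems: string[] = [];
  for (const key of tool.inputSchema.required ?? []) {
    if (!(key in args)) problems.push(`missing: ${key}`);
  }
  for (const [key, value] of Object.entries(args)) {
    const spec = tool.inputSchema.properties[key];
    if (!spec) problems.push(`unknown: ${key}`);
    else if (typeof value !== spec.type) problems.push(`wrong type: ${key}`);
  }
  return problems;
}
```

The point of the sketch is the contrast with screen scraping: the agent never has to guess what a button does, because the page states the tool’s name, purpose, and required inputs up front, and a bad call can be caught before it is made.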

The Openclaw Myth and the Freedom of the Terminal

Some folks look at projects like Openclaw and think MCP was dead before it even started. Openclaw skips the protocol by giving the agent full terminal access and letting it build its own tools on the fly. It is like giving a chef a whole grocery store and a stove instead of a menu. If the agent can just write the code to talk to an API, why do we need a standard protocol? This has led to a few loud voices declaring that the protocol is already dead because the model is smart enough to figure it out on its own.

But this shortcut comes with some heavy baggage that most businesses just cannot carry. Giving an agent full access to a terminal is a massive security nightmare because the agent inherits every permission the user has. On top of that, having an agent build its own tools every time is a waste of energy. It burns through tokens and takes way longer than just using a pre-defined tool that already knows how to do the job.
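The permission difference can be pictured with a small sketch. The `ToolRegistry` class and the `read_calendar` tool below are hypothetical names made up for this example, not part of MCP or Openclaw. The idea is only that an agent working through pre-defined tools can call what has been registered and nothing else, whereas a terminal hands it everything the user can do.

```typescript
// Hypothetical handler type: takes named string inputs, returns a text result.
type ToolHandler = (args: Record<string, string>) => string;

// Hypothetical registry: the only actions available are the ones registered here.
class ToolRegistry {
  private handlers = new Map<string, ToolHandler>();

  register(name: string, handler: ToolHandler): void {
    this.handlers.set(name, handler);
  }

  // The full list of what the agent is allowed to do.
  names(): string[] {
    return Array.from(this.handlers.keys());
  }

  // Runs a registered tool; anything not on the list is refused.
  call(name: string, args: Record<string, string>): string {
    const handler = this.handlers.get(name);
    if (!handler) throw new Error(`tool not allowed: ${name}`);
    return handler(args);
  }
}

const registry = new ToolRegistry();
// A stand-in tool; a real one would query an actual calendar system.
registry.register("read_calendar", ({ day }) => `events for ${day}`);
```

With this setup, `registry.call("read_calendar", { day: "Monday" })` succeeds, while a request for something like `run_shell` throws because nobody registered it. The tool is also written once and reused, so the agent does not spend tokens rebuilding it on every run.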

The final issue is that this build-it-yourself approach assumes the whole world is perfectly documented. Openclaw works great when it is calling a public API with a clean manual, but that is not the actual situation in most companies. Most internal data is trapped in messy, undocumented legacy systems or private databases that don’t have a public help page. Without a protocol to provide that context, the agent is just guessing in the dark. The protocol isn’t a limitation; it’s the map that allows the agent to function in a world that wasn’t built for it.

The MCP Revolution is Just Beginning

MCP is not a passing trend because the problem it solves is not a temporary one. As long as humans and machines share a digital workspace, we will need a way to bridge the gap between human intuition and machine precision. The history of the self-driving car shows us that you cannot just bypass the humans; you have to learn to work with them. You have to build bridges, not just tracks.

By using standards like MCP and WebMCP, we are giving our agents the ability to see what we see and do what we do, but with the speed and efficiency that only a machine can provide. And as we secure these workflows with platforms like LangGuard.AI, we are ensuring that this transition is as safe as it is productive. The road to autonomy is long, but with the right protocol, we are finally headed in the right direction.