Most teams start their AI journey by playing around with a few personal API keys, but things get messy fast as you start to scale. You often end up with a dozen different models and no clear way to manage them all. This is why an AI Gateway is becoming a standard part of the modern tech stack.

What Is An AI Gateway?

Think of an AI Gateway as a central hub that sits between your applications and your AI models. It ensures every request follows the same rules, regardless of which model is being used. A recent Deloitte report points out that succeeding with AI at scale depends on having an organized foundation to connect and govern these interactions.

Why Use An AI Gateway?

You cannot manage what you cannot see, and in the AI world, this visibility is called inference observability. Inference is the process in which a model takes a prompt and generates an answer. If teams across the company use different models, tracking costs and performance becomes a major headache. A gateway fixes this by gathering telemetry, which means automatically collecting data on how the system is behaving: which model was called, by whom, how many tokens it used, and how long it took. As the LangGuard blog mentions, having this level of visibility is the only way to move from small experiments to reliable production systems.
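To make the idea concrete, here is a minimal sketch of what gateway telemetry could look like. It is not how LangGuard or LiteLLM store data; the `TelemetryLog` class, the model names, and the prices are all made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices per 1,000 tokens, for illustration only.
PRICE_PER_1K_TOKENS = {"model-a": 0.5, "model-b": 2.0}


@dataclass
class InferenceRecord:
    team: str
    model: str
    prompt_tokens: int
    completion_tokens: int
    latency_s: float


class TelemetryLog:
    """Collects one record per inference call that passes through the gateway."""

    def __init__(self):
        self.records = []

    def record(self, team, model, prompt_tokens, completion_tokens, latency_s):
        self.records.append(
            InferenceRecord(team, model, prompt_tokens, completion_tokens, latency_s)
        )

    def cost_by_team(self):
        # Sum the estimated spend for each team across every model it used.
        totals = defaultdict(float)
        for r in self.records:
            tokens = r.prompt_tokens + r.completion_tokens
            totals[r.team] += tokens / 1000 * PRICE_PER_1K_TOKENS[r.model]
        return dict(totals)


log = TelemetryLog()
log.record("search", "model-a", 500, 500, 0.2)
log.record("search", "model-b", 1000, 0, 0.9)
log.record("support", "model-a", 2000, 0, 0.4)
print(log.cost_by_team())
```

Because every call lands in the same log, the per-team breakdown comes for free, no matter which model each team picked.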

Things get even more interesting when you start using AI agents that can actually perform tasks, like looking up data or sending emails. This is often done through the Model Context Protocol, or MCP. Think of MCP as a universal language that lets an AI talk to external tools in a consistent way. Since these agents have more power to act, you really need to keep an eye on them. An MCP gateway that understands these tool calls keeps a record of every action the AI takes, so you can always trace what it did, when, and with which inputs.
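As a rough sketch of what "understanding tool calls" can mean in practice: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with the tool `name` and `arguments` in `params`. The function below picks those messages out and logs them. The `record_tool_call` helper, the `agent_id` field, and the `send_email` tool are hypothetical names chosen for this example.

```python
import json
from datetime import datetime, timezone


def record_tool_call(raw_message, agent_id, log):
    """Append an entry to `log` if the message is an MCP tool call.

    Returns True when the message was a tool call, False otherwise.
    """
    msg = json.loads(raw_message)
    if msg.get("method") != "tools/call":
        return False
    params = msg.get("params", {})
    log.append(
        {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,  # hypothetical identifier for the calling agent
            "request_id": msg.get("id"),
            "tool": params.get("name"),
            "arguments": params.get("arguments", {}),
        }
    )
    return True


tool_call_log = []
example = json.dumps(
    {
        "jsonrpc": "2.0",
        "id": 7,
        "method": "tools/call",
        "params": {"name": "send_email", "arguments": {"to": "ops@example.com"}},
    }
)
record_tool_call(example, "agent-1", tool_call_log)
print(tool_call_log[0]["tool"], tool_call_log[0]["arguments"])
```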

How Gateways Add Enforcement

Watching what happens is a great start, but sometimes you need to step in and stop a mistake before it happens. This is enforcement, and doing it at the gateway has a clear advantage: instead of asking every developer to write their own security checks, you define a rule once and it applies to every application and model behind the gateway. For instance, you could block any response that accidentally includes sensitive customer data. Analysts at Gartner have noted that this kind of central control is a huge priority for companies trying to balance speed with safety as they grow their infrastructure.
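Here is a deliberately simple sketch of a response rule like the one described above. The two regular expressions (an email address and a US Social Security number format) are stand-ins; a real deployment would rely on a proper detection engine, and the `check_response` name is just an assumption for this example.

```python
import re

# Illustrative patterns only; real sensitive-data detection needs far more care.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def check_response(text):
    """Return (allowed, findings) for a model response.

    `findings` lists the names of every pattern that matched.
    """
    findings = [
        name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)
    ]
    return (not findings, findings)


print(check_response("Your order has shipped."))
print(check_response("You can reach the customer at jane@example.com."))
```

The point is less the patterns themselves than where they live: one function at the gateway, rather than a copy in every application.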

This kind of safety is a big deal when agents have access to your internal systems. If an AI has permission to delete a file or move data, you want a second pair of eyes on that action. The gateway acts as a safety net, checking every tool call against your company policies. This helps avoid accidents and gives you an immutable audit trail. An audit trail is a permanent record of actions that cannot be changed later, which is a lifesaver when you need to prove that your systems followed the rules.
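One common way to approximate an unchangeable record in software is a hash chain, where each entry stores the hash of the one before it. The sketch below pairs that with a tiny deny-list policy. It is an assumption about how such a trail could be built, not a description of LangGuard's implementation, and a hash chain makes tampering detectable rather than impossible. The tool names and helper functions are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64
DENIED_TOOLS = {"delete_file"}  # hypothetical company policy


def evaluate(tool):
    """Check a tool call against the policy."""
    return "deny" if tool in DENIED_TOOLS else "allow"


def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(trail, tool):
    """Record the decision for a tool call, chained to the previous entry."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    body = {"tool": tool, "decision": evaluate(tool), "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    trail.append(entry)
    return entry


def verify(trail):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in trail:
        body = {k: entry[k] for k in ("tool", "decision", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


trail = []
append_entry(trail, "read_file")
append_entry(trail, "delete_file")
print([e["decision"] for e in trail], verify(trail))
```

If someone later edits an old entry, its hash no longer matches and every check of the chain fails from that point on.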

Announcing: LangGuard AI Enforcement

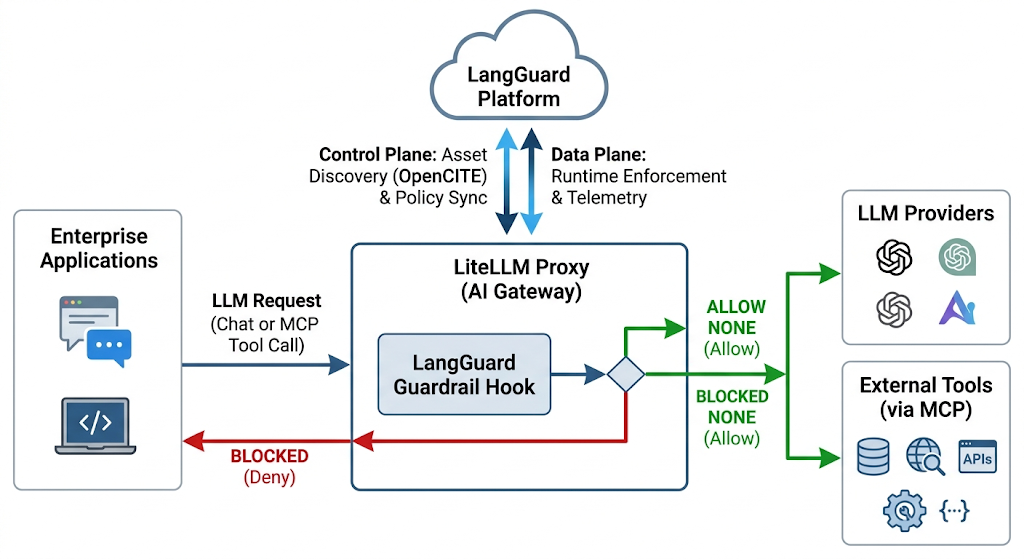

To make all of this easier, we have built a deep integration between LangGuard and LiteLLM. If you are not familiar with it, LiteLLM is a popular tool that lets you connect to hundreds of different AI models through one simple interface. By pairing it with the LangGuard platform, you get a full setup for both watching and controlling your AI traffic. It is an easy way to stay flexible with your model choices without giving up the oversight you need in a professional environment.

Setting up the integration is designed to be as simple as possible. Once you connect the two, LangGuard automatically discovers your models, MCP servers, and tools, and keeps your AI asset inventory current as you add new ones. You do not have to spend time on manual updates or spreadsheets to keep track of everything.

The most helpful part of this setup is the automatic guardrails. LangGuard can deploy security policies directly to your LiteLLM proxy so they run in real time. If an AI request looks suspicious or violates a rule, the gateway blocks it immediately. Security is the core focus here, which is why we used a fail-closed design. This means that if the connection between the systems ever drops, the gateway blocks AI traffic by default until the connection is restored. A temporary pause is much better than letting unprotected data slip through. We also added a permissive mode. This lets you test new security rules by receiving an alert instead of blocking the user, which is a great way to try out a policy before you fully commit to it.
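The difference between fail-closed and permissive behavior is easiest to see as a small decision function. This is an illustrative sketch, not LangGuard or LiteLLM code: `decide`, `Mode`, and `PolicyServiceUnavailable` are hypothetical names, and the choice to block during an outage even in permissive mode is an assumption made for this example.

```python
from enum import Enum


class Mode(Enum):
    ENFORCE = "enforce"
    PERMISSIVE = "permissive"


class PolicyServiceUnavailable(Exception):
    """Raised when the policy check cannot be reached."""


def decide(request, check_policy, mode, alerts):
    """Return "allow" or "block" for a request.

    `check_policy` returns None when the request is clean, or a string
    describing the violation.
    """
    try:
        violation = check_policy(request)
    except PolicyServiceUnavailable:
        # Fail closed: with no verdict available, block rather than guess.
        return "block"
    if violation is None:
        return "allow"
    if mode is Mode.PERMISSIVE:
        # Permissive mode: raise an alert but let the request through.
        alerts.append(violation)
        return "allow"
    return "block"


def demo_policy(request):
    return "contains secret" if "secret" in request else None


alerts = []
print(decide("share the secret plan", demo_policy, Mode.ENFORCE, alerts))
print(decide("share the secret plan", demo_policy, Mode.PERMISSIVE, alerts))
print(alerts)
```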

Bringing Sanity To AI Governance

Combining LangGuard and LiteLLM gives you the infrastructure to build AI applications that are safe and easy to monitor. You can use the latest models while knowing your data is protected and your costs are tracked. Our goal is to help you focus on building great products while we take care of the governance and monitoring. This is a big step toward making AI work reliably for everyone involved in your organization.

How is your organization planning to manage the growing complexity of your AI tool connections this year?